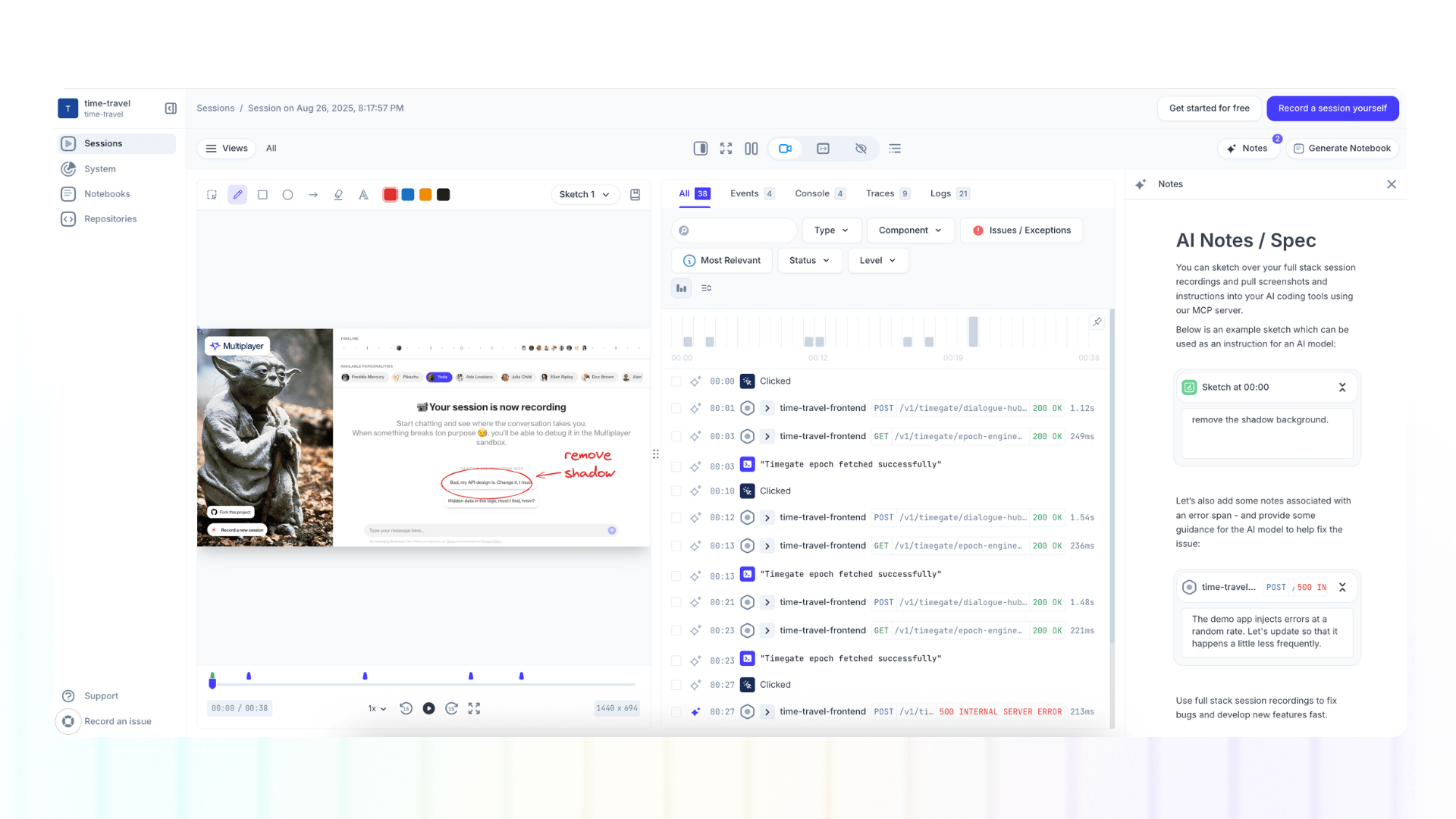

Multiplayer sketches: annotating session recordings for better collaboration


Whiteboarding tools are indispensable in system design for conveying concepts, ideas, and rough plans visually. As the saying goes, "a picture is worth a thousand words."

But static whiteboarding tools lack the crucial element that makes feedback truly actionable: context.

That's why we evolved our Sketches feature into Annotations, a way to draw, write, and comment directly on top of full-stack session recordings. Now, instead of sketching ideas in isolation, teams can mark up actual user sessions, highlighting specific UI elements, API calls, and backend traces that need attention.

Why Annotate Session Recordings?

Multiplayer automatically captures everything happening in your application: frontend screens, user actions, backend traces, metrics, logs, and full request/response content and headers. But when something goes wrong or needs improvement, pointing at the exact moment and explaining what should change requires more than just text.

Annotations let you:

- Draw directly on the replay with shapes, arrows, and highlights to mark problem areas or desired changes

- Add on-screen text to explain intended behavior or specify new UI copy

- Attach timestamp notes to clarify reproduction steps, requirements, or design intentions

- Reference full-stack context by annotating user clicks, API calls, traces, and spans directly

Because Multiplayer auto-correlates frontend and backend data, your annotations aren't just surface-level markup: they're tied to the actual technical events that need investigation or modification.
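To make "tied to the actual technical events" concrete, here is a minimal sketch of how an annotation could be anchored to both a moment in the recording and a backend span. Every name here (`Annotation`, `Span`, `annotationsForSpan`, and all fields) is a hypothetical illustration, not Multiplayer's actual data model or API.

```typescript
// Hypothetical data model for illustration only; not Multiplayer's API.
type AnnotationKind = "shape" | "arrow" | "highlight" | "text" | "timestamp-note";

interface Annotation {
  id: string;
  kind: AnnotationKind;
  offsetMs: number; // position in the recording, in ms from the start
  note?: string;
  traceId?: string; // set when the annotation references a backend trace
  spanId?: string; // set when it references one span within that trace
}

interface Span {
  traceId: string;
  spanId: string;
  name: string;
  startMs: number; // span start, on the same clock as offsetMs
  endMs: number;
}

// Returns annotations that reference the span explicitly, plus annotations
// with no trace reference whose timestamp falls inside the span's time window.
function annotationsForSpan(annotations: Annotation[], span: Span): Annotation[] {
  return annotations.filter((a) => {
    const explicit = a.traceId === span.traceId && a.spanId === span.spanId;
    const inWindow =
      a.traceId === undefined &&
      a.offsetMs >= span.startMs &&
      a.offsetMs <= span.endMs;
    return explicit || inWindow;
  });
}
```

The design choice worth noting is the two kinds of anchor: an explicit trace/span reference survives even if the note is placed later in the replay, while a purely visual markup is matched by time alone.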

How Support Teams Use Annotations

1. Clarifying Bug Reports

When a customer reports confusing behavior, support teams can create an annotated recording that shows:

- Red circles highlighting where the UI behaved unexpectedly

- Arrows pointing to the button that should have appeared

- Text annotations explaining what the customer expected to see

- Timestamp notes marking the exact API call that returned the wrong data

This annotated session becomes a complete bug report that engineering can understand immediately. No back-and-forth required.

2. Documenting Reproduction Steps

Instead of writing lengthy reproduction steps like "Click the dashboard, then filters, then date range, then apply," support can:

- Record themselves reproducing the issue once

- Add timestamp notes at key moments: "User opens filters here," "Selects invalid date range," "Error appears at 0:45"

- Highlight the error message in red with a note: "This message is confusing. We should clarify the valid date format."

Engineering gets a visual, interactive guide to the problem with full backend context included.
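As a rough illustration of why timestamp notes can replace hand-written steps, the sketch below turns a set of notes into an ordered reproduction list. The `TimestampNote` shape and both function names are assumptions made for this example, and the offsets in the usage note are made up. This is not an export format Multiplayer is known to provide.

```typescript
// Hypothetical note shape for illustration only.
interface TimestampNote {
  offsetMs: number; // position in the recording, in ms from the start
  text: string;
}

// Formats a millisecond offset as m:ss, e.g. 45000 becomes "0:45".
function formatOffset(offsetMs: number): string {
  const totalSeconds = Math.floor(offsetMs / 1000);
  const minutes = Math.floor(totalSeconds / 60);
  const seconds = totalSeconds % 60;
  return minutes + ":" + (seconds < 10 ? "0" + seconds : String(seconds));
}

// Sorts notes by time (without mutating the input) and numbers them as steps.
function toReproSteps(notes: TimestampNote[]): string[] {
  return notes
    .slice()
    .sort((a, b) => a.offsetMs - b.offsetMs)
    .map((n, i) => `${i + 1}. [${formatOffset(n.offsetMs)}] ${n.text}`);
}
```

Given notes at 12 seconds ("User opens filters here"), 30 seconds ("Selects invalid date range"), and 45 seconds ("Error appears"), added in any order, `toReproSteps` returns them in chronological order, each prefixed with its step number and timestamp.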

3. Collecting Feature Requests with Visual Context

When customers suggest improvements, support can annotate recordings to show:

- Green highlights around areas customers want enhanced

- Sketched mockups showing proposed layouts

- Text annotations with customer quotes about desired behavior

How Engineering Teams Use Annotations

1. Reviewing PRs with Visual Feedback

During code review, engineers can record themselves testing a new feature and add annotations:

- Yellow boxes around UI elements that need spacing adjustments

- Arrows indicating where loading states should appear

- Text specifying exact pixel values or color codes

- Timestamp notes on API calls: "This endpoint takes 2.3s. Should we add caching?"

The developer receives actionable visual feedback tied to actual runtime behavior, not abstract suggestions.

2. Debugging with Annotated Evidence

When investigating production issues, engineers can:

- Record a session where the bug occurs

- Circle the problematic UI element in red

- Add arrows pointing from the frontend error to the failing API trace

- Annotate the trace span with notes: "This database query times out under load"

This creates a self-documenting investigation that other team members can follow.

3. Planning Refactors with Visual Context

Before refactoring complex flows, teams can:

- Record the current user journey

- Use color-coded annotations to separate concerns (blue for performance, purple for UX improvements, orange for tech debt)

- Add timestamp notes explaining why each step exists

- Sketch the proposed new flow directly on top of the recording

- Reference specific API calls and traces that will be affected
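If your team agrees on a color convention like the one above, the annotations can later be grouped into a per-concern worklist. The sketch below assumes a hypothetical `ColoredAnnotation` shape and a team-defined color map. Neither is part of Multiplayer's API; the colors simply mirror the example convention in this section.

```typescript
// Hypothetical types for illustration only.
type Concern = "performance" | "ux" | "tech-debt";

interface ColoredAnnotation {
  color: string;
  offsetMs: number; // position in the recording, in ms from the start
  note: string;
}

// Team convention from the list above: blue, purple, orange.
const COLOR_TO_CONCERN: { [color: string]: Concern | undefined } = {
  blue: "performance",
  purple: "ux",
  orange: "tech-debt",
};

// Buckets annotations by concern; colors outside the convention are skipped.
function groupByConcern(
  annotations: ColoredAnnotation[]
): Record<Concern, ColoredAnnotation[]> {
  const groups: Record<Concern, ColoredAnnotation[]> = {
    performance: [],
    ux: [],
    "tech-debt": [],
  };
  for (const a of annotations) {
    const concern = COLOR_TO_CONCERN[a.color];
    if (concern !== undefined) {
      groups[concern].push(a);
    }
  }
  return groups;
}
```

Skipping unknown colors rather than throwing is a deliberate choice here: a stray red circle from a bug report should not break a refactor plan.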

4. Onboarding New Engineers

Senior engineers can create annotated recordings that serve as interactive documentation:

- Record a typical user flow

- Add green annotations explaining key architectural decisions

- Highlight important code paths with timestamp notes

- Mark API boundaries and service interactions

- Sketch out related system components and their relationships

New team members can pause, replay, and reference the full-stack context as they learn.

👀 If this is the first time you’ve heard about Multiplayer, you may want to see full-stack session recordings in action. You can do that in our free sandbox: sandbox.multiplayer.app

If you’re ready to trial Multiplayer, you can start a free plan at any time.